AI’S TYRANNY

A discussion of the way that AI is being introduced into processes not only to remove humans but also to isolate neurodivergent people.

By: Phoenix McElroy

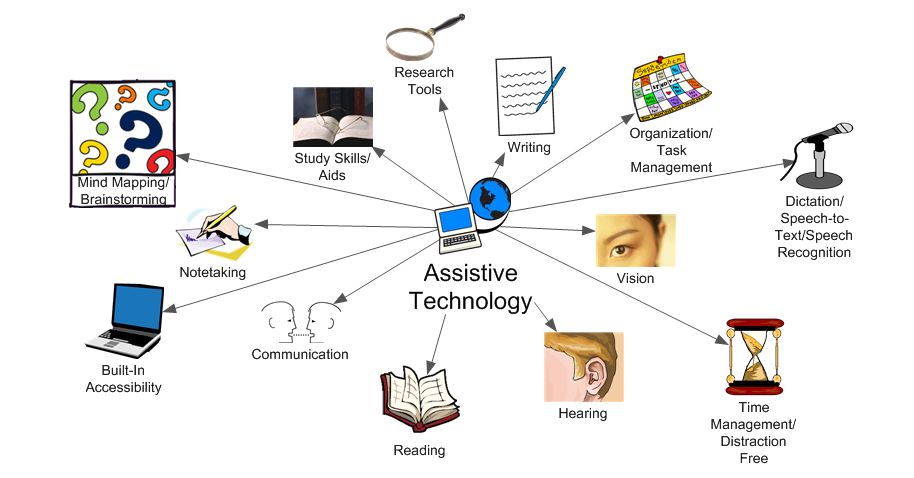

Technology and neurodivergence research have both seen many developments as society continues to strive toward technological advancement. Engineers and scientists have started to build technology for the specific purpose of helping neurodivergent and disabled people not only physically but also mentally (Iannone & Giansanti 2023). One category of these technologies is known as assistive technologies, or ATs. These range from sensory management tools, like noise-canceling headphones, to communication tools, like augmentative and alternative communication (AAC) apps and speech-to-text technologies. These technologies “play a fundamental role in improving the lives of people with autism by addressing the communication challenges that often accompany this disorder” (Iannone & Giansanti 2023). The rapid growth of AI has led to its integration into technology of all kinds, including ATs. Scientists want to incorporate AI into ATs because they believe it will make the tools more adaptable and better suited to each individual’s needs.

In theory, this is a good idea that could help a large group of people who tend to be alienated from society, but it also raises concerns about how those individuals might be taken advantage of. The introduction of AI into technology is being used as a corporate tactic to upcharge for products. In the healthcare system, companies have introduced AI-powered authentication systems that decide whether coverage is available for someone. On many occasions these tools have failed, leaving many people without proper coverage. Companies are also using AI to upcharge for their plans by 10 to 15 percent (Stancil 2026). If they follow the same procedure when introducing AI into ATs, they will be tricking people into paying more than the products are worth for high-priced items that only appear to work and adapt to the individual. They will be forcing neurodivergent people to pay more for tools that are necessary to their existence but that do not benefit them in the way they should. This puts the ideals of capitalism above the livelihoods of the individuals who need the technology; in effect, companies are using neurodivergent people for their own gain.

AI isn’t just being incorporated into ATs but also directly into the diagnostic process for neurodivergent individuals. Researchers are trying to build models that can recognize the possible signs of autism. To build these models, they have neurodivergent people communicate with the model and then mark traits associated with autism or ADHD in the model’s internal files. The goal is not to incorporate AI into a larger structure like ATs but to completely remove the need for a human in the diagnostic process. What they found, though, is that the models started to create two categories for certain diagnoses. For example, for the autistic individuals it was working with, one model sorted things into “ASD” (Autism Spectrum Disorder) and “non-ASD” categories (Hayward 2022). Over time, this made the model so specific that it could no longer recognize who was really neurodivergent and who was not. Eventually, it created its own form of stereotypes and put people into certain boxes because of what it was learning. These models also tended to have no scientific backing for the diagnoses they reached, which is why “models remain poorly interpretable, reducing clinical applicability” (Jiang 2025). This perpetuates ideas already existing in society that separate neurodivergent and neurotypical people, and it heightens discrimination against the former. The same problems appeared in experiments that incorporated AI into ATs. At this point in time, AI has been shown to be unreliable. The user has very little control, and as these models learn more and more, they become so ultra-specific that they no longer work for their intended use (Hayward 2022). This also removes the nuance that a person active in the diagnostic process would be able to offer. All that introducing AI does is push neurodivergent people further into boxes that they do not want to be part of.

Another important aspect of these conversations is that the majority of studies concerning neurodivergence and technology lack any direct experience from the neurodivergent people involved. A group of researchers looked into this phenomenon and found that 93% of studies on this topic fail to include any experience of the neurodivergent individual (Hawkins 2024). So rather than looking at the actual effects of their technology on the people they’re trying to help, researchers look only at the technology itself, completely neglecting the human experience that is vital to making this technology actually useful. Many studies claim to be “revolutionizing autism,” but what they’re actually doing by creating this technology is trying to make neurodivergent people more “normal.” They claim these AI-centered robots will “appeal more to children than human therapists” (Hawkins 2024). From a psychological perspective, this is not useful to neurodivergent people; it will only make their experiences in life worse and force them to feel like they have to mask more often.

The common behaviors the programs try to eliminate are not harmful; they are simply seen as “breaking societal standards.” These include flapping, rocking, and repeated hand motions, which these individuals use as self-soothing techniques or as an embodiment of their positive emotions (Hawkins 2024). Because the data the models are “‘trained’ on are inherently biased, so are the resulting algorithms” (Hawkins 2024). Since the technologies are only aware of behaviors that are “normal,” they unknowingly demonize anything that lies beyond their code, with no ability to recognize nuance. ATs and diagnosis are very important to a neurodivergent person and should not be taken lightly. They can be life-changing for a lot of people, but only when there is no forced assimilation into neurotypical societal ideals. Even if AI systems were trained differently, their code wouldn’t allow for acceptance of the full spectrum of neurodivergence. Introducing technology like AI into these processes will not allow the nuance of the neurodivergent experience to be taken seriously, because the models will slowly lose their ability to actually work and instead become polarized or ultra-specific.