CONCLUSION

AI CHECKER RELIABILITY

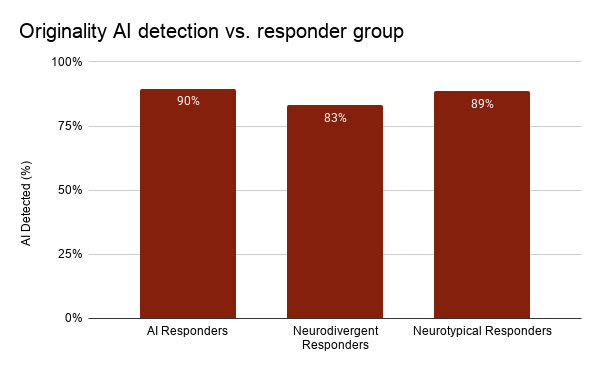

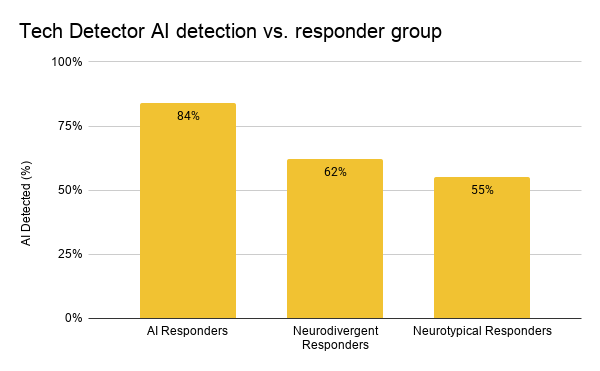

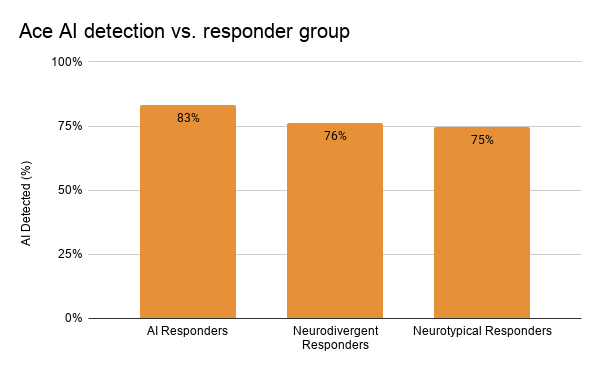

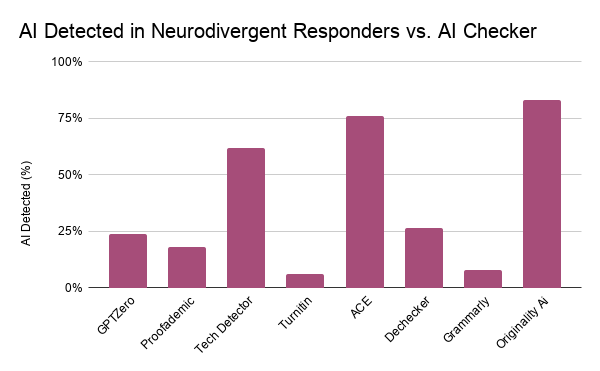

The checkers and graphs above are the ones that I believe point to general unreliability rather than to neurodivergent targeting:

These are the results that I find most useful on a user basis. Across every graph except Originality AI Detection vs. Responder Group, there is a clear pattern of neurodivergent people having a higher average AI detection score in their work. Whether the problem is reliability or discrimination does differ from detector to detector. In the Originality AI graph, neurotypical responders have the higher average detection, but it is only 1% lower than the average for AI responders. Overall, every responder type lies within a 7% range. This means this AI detector isn’t specifically targeting neurodivergent people. Instead, it isn’t reliable for anyone, because it is highly likely to say AI is present no matter who wrote the text. The same is true of Tech Detector and Ace. Both show a higher average for neurodivergent people, but the averages for all groups are much closer together, which is why their reliability as programs is more likely the main problem.
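The comparison described above (how far apart the group averages sit for one detector) can be sketched in a few lines of Python. This is only an illustration, not the method used to build the graphs: the function names and the scores are hypothetical placeholders, and treating the 7-point range mentioned above as a hard cutoff is an assumption made for the sketch.

```python
from statistics import mean

# Assumption: a range of 7 points or less between group averages counts as
# "close together". The 7 comes from the discussion above; using it as a
# hard cutoff is a simplification made for this sketch.
SPREAD_CUTOFF = 7.0


def group_averages(scores):
    """Return the average detection percentage for each responder group."""
    return {group: mean(values) for group, values in scores.items()}


def spread(averages):
    """Return the gap between the highest and lowest group average."""
    return max(averages.values()) - min(averages.values())


def groups_are_close(averages, cutoff=SPREAD_CUTOFF):
    """True when no responder group stands out from the others."""
    return spread(averages) <= cutoff


# Placeholder scores for illustration only, NOT data from this study.
example_scores = {
    "neurodivergent": [62.0, 70.0, 66.0],
    "neurotypical": [64.0, 68.0, 63.0],
    "ai": [65.0, 69.0, 67.0],
}

averages = group_averages(example_scores)
print(averages)
print("spread:", spread(averages))
print("groups are close:", groups_are_close(averages))
```

When the groups are close and the human averages are also high, the detector is flagging almost everything, which points to a reliability problem rather than targeting of one group.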

Before I analyze the other graphs, I want to discuss why it might benefit an AI detector to overwhelmingly flag writing as AI. Many of these AI detectors are either locked behind a paywall or keep certain features behind one. These features usually include a breakdown of exactly which sentences are AI, along with a humanizer feature. Humanizer features claim to rewrite AI-generated writing so that AI detectors can’t detect it. These features give an AI detector a reason to consistently claim there is AI, because when it does, people are more likely to want the other features and pay for them. While this isn’t a problem only for neurodivergent people, it does show how AI integration is being used to take advantage of people’s lack of knowledge so big corporations can make more money off unreliable products. This connects directly to how the ATs (assistive technologies) that I previously discussed might have AI integration not as a technological advancement for the good of the people, but to perpetuate capitalistic ideals. These ideals target groups of people who require assistance, because out of necessity they have no choice but to pay more money for the label of “AI Integration”.

AI CHECKER NEURODIVERGENT TARGETING

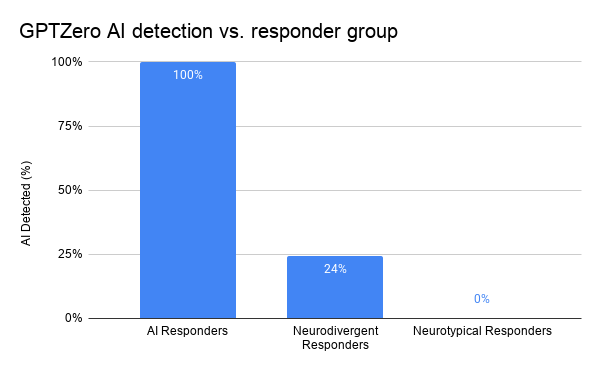

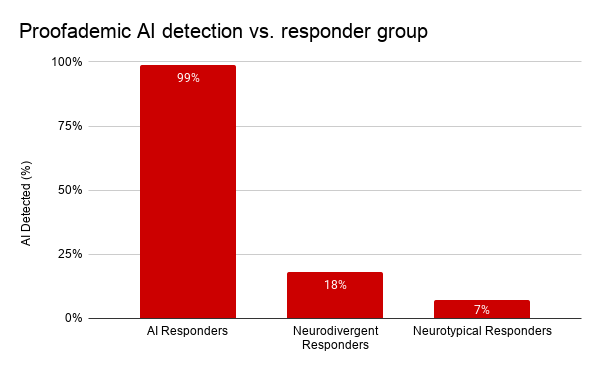

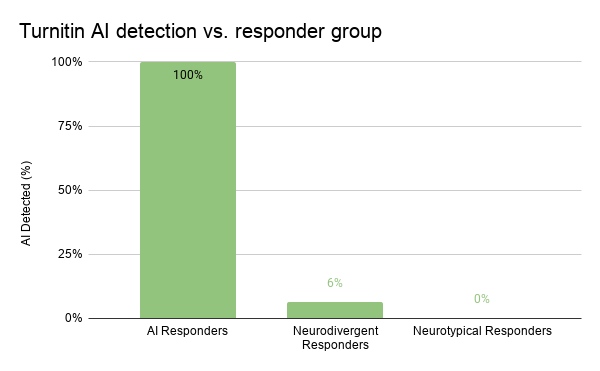

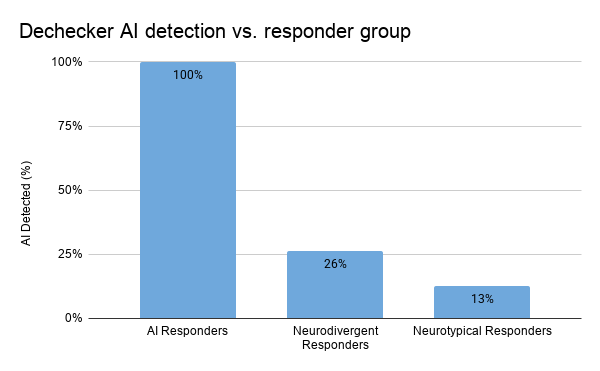

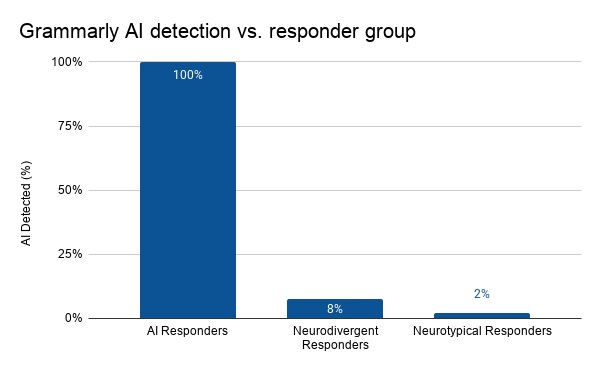

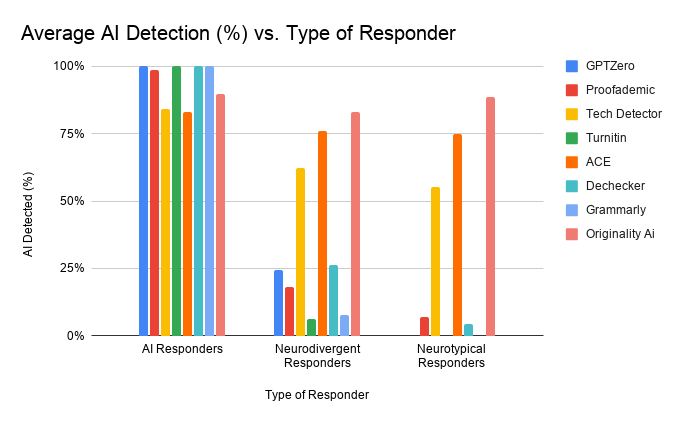

The checkers and graphs above are the ones that I believe show how neurodivergent people are more likely to be targeted by false positives:

These graphs show the AI detectors that seem to be reliable, because they almost always mark the AI responses correctly and the average % for other responders isn’t unusually high. These are also the first detectors to come up when searching for reliable AI checkers. They differ in how much they target neurodivergent people, with GPTZero having the biggest difference between neurodivergent and neurotypical responders. The others all mark neurodivergent responders more often, but the more reliable the detector, the smaller the difference appears to be. Of these, Turnitin appears to be the most reliable, with the lowest false positive average, but the existence of false positives at all is still concerning. Any of these detectors could lead to people being falsely accused and facing serious repercussions, especially because Turnitin is one of the most common services integrated with the learning management systems (LMS) used in schools.

Here you can see which detection services are more problematic for neurodivergent individuals. This graph works in tandem with the graphs for each individual service. Overall, these graphs help identify which checkers are more harmful, but they also show that every checker has a bias. This bias is clearly unavoidable, and no matter which AI checker is used, it is possible to get a false positive. The mere possibility of a false positive should be enough to stop schools from using these checkers, as it will only lead to more isolation. Neurodivergent people already have a hard time in schools, where accommodations are hard to come by, and they shouldn’t be punished for other people using AI. Instead, educators should be taught the real ways AI can be spotted, along with positive ways to handle AI usage in school settings.

This graph highlights the AI checkers that are outliers for their lack of reliability, and it gives a clear view of how neurodivergent people are consistently targeted more. Neurodivergent responders have clearly higher averages of false positives, while neurotypical responders don’t have nearly as many. The checkers that aren’t reliable also don’t reach 100% averages for AI-generated content, which shows a clear fault in the AI models meant to detect AI.

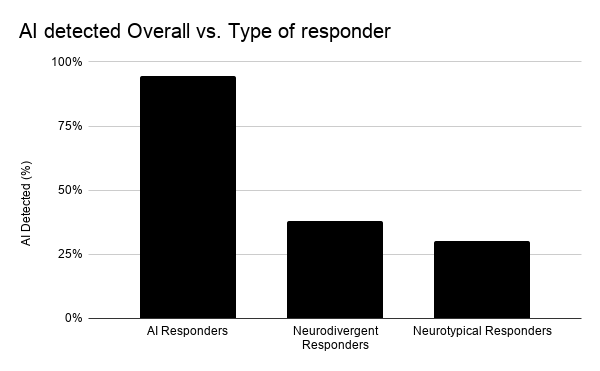

This is the final graph, which I created to see a clear correlation across all services. There is a clearly different rate of false positives in the neurodivergent column, which I believe would be even clearer if the outliers were removed from this data. The way to improve much of this data would have been to test reliability separately first, for AI vs. human respondents, and then weed out the checkers that were unreliable. Having that data before the rest would have allowed me to specialize the data even further. In the end, though, this still acts as a clear illustration of the way AI is harmful to the neurodivergent population as a whole.
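The two-step improvement described above (check reliability on AI vs. human text first, then compare groups only for the checkers that pass) could look roughly like the sketch below. Everything in it is hypothetical: the detector names, the scores, and the two cutoffs (`min_ai_avg`, `max_human_avg`) are assumptions chosen for illustration and are not values from this study.

```python
from statistics import mean


def is_reliable(ai_scores, human_scores, min_ai_avg=90.0, max_human_avg=20.0):
    """Step 1: a checker passes if it scores AI text high and human text low.

    The cutoffs are assumed values for this sketch, not study results.
    """
    return mean(ai_scores) >= min_ai_avg and mean(human_scores) <= max_human_avg


def keep_reliable(detectors):
    """Drop checkers that fail the AI vs. human reliability test."""
    kept = {}
    for name, scores in detectors.items():
        human = scores["neurodivergent"] + scores["neurotypical"]
        if is_reliable(scores["ai"], human):
            kept[name] = scores
    return kept


def false_positive_gap(scores):
    """Step 2: neurodivergent average minus neurotypical average."""
    return mean(scores["neurodivergent"]) - mean(scores["neurotypical"])


# Placeholder detectors and scores for illustration only, NOT study data.
detectors = {
    "detector_a": {
        "ai": [98.0, 100.0],
        "neurodivergent": [20.0, 30.0],
        "neurotypical": [5.0, 10.0],
    },
    "detector_b": {
        "ai": [70.0, 60.0],
        "neurodivergent": [60.0, 70.0],
        "neurotypical": [65.0, 55.0],
    },
}

kept = keep_reliable(detectors)
gaps = {name: false_positive_gap(scores) for name, scores in kept.items()}
print(gaps)
```

Filtering first means the final comparison is not blurred by checkers that flag nearly everything, which is the outlier problem noted above.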

FINAL COMMENTS

This study ended up making the problem with AI detection much clearer than I thought it would. I was hopeful that I would find my own results, but I didn’t know how inaccurate AI checkers truly were. In future studies like this one, I would find more ways to specify my data and would recruit more respondents for each individual diagnosis that falls under the neurodivergent umbrella. That way I could create an in-depth analysis of which diagnoses are more likely to be targeted and hopefully draw a clear connection between classroom experience and AI’s systematic issues. I also think a further study testing educators on their ability to spot various levels of AI usage could help give educators a clear tool, so they wouldn’t have to rely on things that simply appear to be useful.

In conclusion, AI detectors show a clear and problematic pattern of targeting neurodivergent writers. This alienation happens simply because of traits neurodivergent people are more likely to have, especially in their writing styles. Their writing is usually much more pattern-driven, and because AI writing is similar, detectors are more likely to falsely accuse them. I hope this can act as proof, even informal proof, that AI detection should not be used. This study was done on a small scale, but even at that scale it produced alarming results. There are many ways to limit AI usage and stop it from perpetuating harmful divisions in society. The clearest way forward is through regulation. Right now very few regulations exist to limit AI usage and availability. If some were implemented, they could reduce AI’s availability to the public and hopefully require more testing to ensure it’s being used in a positive manner. In the end, this may not seem like a problem to the majority of society, but the problem is only beginning. It will eventually make its way into every aspect of our lives unless we collectively put a stop to it.